Edge AI Chips Take to the Field

Semi makers big and small are vying to make AI at the edge a reality. But scoping out the best applications and moving machine learning models successfully to silicon can be daunting.

July 28, 2021

Airplanes and automobiles, databases and personal computers: all have ubiquitous form factors today, but all started out with diverging architectures.

So it’s not surprising that the shape of edge AI chip technology is similarly diversified. These are nascent days for AI chips. And with numerous designs in the market, there’s unlikely to be a common architecture anytime soon.

Today, established vendors and startup chip houses alike have jumped into the fray in a bid to complement or displace conventional microprocessors and controllers. Users gain with AI analytics at the edge or on device, without requiring data processing round-trips to cloud computing services.

But the constraints on hardware devices that process AI algorithms locally, whether individual chips or more complex chip combos known as systems on a chip (SOCs), are daunting, especially in ambitious IoT designs engineered for low power consumption and low device cost.

TinyML, a type of machine learning that shrinks deep learning networks, and the data required, to fit on smaller hardware, has arisen in particular as a general set of concepts meant to address these constraints: to provide neural processing of machine learning models, conserve chip die area and power consumption, and communicate with cloud computing services when needed.

The edge’s appeal lies in the fact that it’s dispersed and it’s everywhere. However, this is also a challenge for engineers to overcome, according to Linley Gwennap, founder and principal analyst at the Linley Group. That is something vendors and users both are now facing.

“‘Edge’ is a term that covers a lot of ground: everything from the edge of the cellular network and cellular base stations, autonomous cars, smartphones, consumer electronics, and smart sensors,” he said. “Obviously, the chip requirements for those different types of products are very different.”

Vendors’ task is to find the best entry points to deploy AI on the edge. For the IoT practitioner, there’s a dizzying array of options to review. One finds such players as the following:

* Atlazo’s AZ-N1 System on a Chip. This system targets “smart tiny devices” and is based on a power-efficient ML processor known as Axon I. It’s designed to handle signals efficiently and is built to link up with audio, voice and health monitoring, along with various sensors. Energy-efficient audio processing is the chip’s big play, with a low-power audio codec that supports up to four microphones. AZ-N1 also sports Bluetooth connectivity, DC/DC regulators and a bespoke battery charger.

* Mythic’s M1076 Analog Matrix Processor. It does neural computing in the analog domain, rather than the digital domain. The device can run at up to 25 TOPS (trillion operations per second) within a 3-watt power envelope. Up to 16 M1076s can reside on a single PCIe card. Target apps include industrial machine vision, autonomous drones and intelligent surveillance cameras.

* Qualcomm’s QCM6490/QCS6490 SOC. This is one among a wide variety of chips from the established smartphone chip maker. It is based on a Kryo 670 CPU, Adreno 642L GPU and Adreno 633 VPU and includes a Hexagon DSP AI engine. The SOC targets connected healthcare, logistics management and warehousing, among other use cases.
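As a rough illustration of what the Mythic figures in the list above imply, the short Python sketch below divides peak throughput by the power envelope and scales the result to a fully populated card. It assumes that the quoted 25 TOPS and 3 watts are peak values reached at the same time, and that throughput and power scale linearly across 16 chips. Neither assumption comes from the vendor, and the function names are made up for this example.

```python
from typing import Tuple


def tops_per_watt(peak_tops: float, power_watts: float) -> float:
    """Peak throughput divided by the power envelope."""
    if power_watts <= 0:
        raise ValueError("power_watts must be positive")
    return peak_tops / power_watts


def card_totals(peak_tops: float, power_watts: float, chips: int) -> Tuple[float, float]:
    """Aggregate peak TOPS and chip power for a card, assuming linear scaling.

    Only the accelerator chips are counted; other card components are ignored.
    """
    return peak_tops * chips, power_watts * chips


if __name__ == "__main__":
    # 25 TOPS and 3 W are the per-chip figures quoted in the article.
    print(f"{tops_per_watt(25, 3):.1f} TOPS/W per chip")
    total_tops, total_watts = card_totals(25, 3, 16)
    print(f"{total_tops:.0f} peak TOPS and {total_watts:.0f} W for 16 chips")
```

Under those assumptions a single chip works out to a little over 8 TOPS per watt. Peak figures of this kind say little about sustained performance on a particular model, so they are best treated as an upper bound when comparing parts.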

There are more – many more – contenders in this area. Among long-established makers of microprocessors and microcontrollers in the edge AI hunt are AMD, ARM, Intel, Maxim, NVIDIA, NXP, STMicroelectronics, and others. Startups counted in the competition include Blaize, BrainChip, Deep Vision, Hailo, Flex Logix, and others.

For the vendors, it’s a potential money spinner. As sensor data is increasingly handled from the network edge, it is likely that AI processing will spur overall IoT market growth.

A Facts and Factors research report pegged the global Edge AI Hardware Market at an estimated 594 million units in 2019, and expected it to reach 2.16 billion units by 2026. That represents a compound annual growth rate (CAGR) of 20.2% from 2019 to 2026. For its part, Deloitte has predicted edge AI chip units will exceed 1.5 billion by 2024. Its estimate suggests annual growth in unit sales of edge AI chips of at least 20%, more than double the forecast for overall semiconductor sales.
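The Facts and Factors figures can be checked against each other with a few lines of Python. The sketch below compounds the 2019 unit count forward at the quoted growth rate and, separately, derives the growth rate implied by the two end points. It assumes seven annual compounding periods between 2019 and 2026; the function names are illustrative only.

```python
def project(start_units: float, cagr: float, years: int) -> float:
    """Compound start_units forward at a fixed annual growth rate."""
    return start_units * (1.0 + cagr) ** years


def implied_cagr(start_units: float, end_units: float, years: int) -> float:
    """Annual growth rate that turns start_units into end_units over `years`."""
    return (end_units / start_units) ** (1.0 / years) - 1.0


if __name__ == "__main__":
    years = 2026 - 2019  # assumed: seven compounding periods
    projected = project(594e6, 0.202, years)
    rate = implied_cagr(594e6, 2.16e9, years)
    print(f"594M units at 20.2% for {years} years: {projected / 1e9:.2f}B units")
    print(f"Growth rate implied by 594M -> 2.16B: {rate:.2%}")
```

Compounding 594 million units at 20.2% gives roughly 2.15 billion, and the end points imply a rate of about 20.25%, so the report's numbers are consistent to within rounding.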

Focused on Signals, Analogs and Balance

Key issues in edge AI surface in the different approaches chip makers employ. Atlazo targets its SOCs at the ultra-low-power AI/ML workspace, which is expected to be populated by “smart hearable” devices. That means, for example, apps that use audio signals for battery-powered health monitoring and activity tracking.

The company’s principals come from a communications chip background, with CEO Karim Arabi having served as a vice president for Qualcomm’s engineering and R&D groups, before starting Atlazo in 2016. AI at the edge will succeed where full functionality is tailored for the job, he said. And it is by no means only about AI/ML processing.

Functionality means “all the way from communication to processing to memory, and to analog interfacing and power management, including recharging, in a single chip,” Arabi said. While others see limits with Bluetooth communication, Arabi sees it as ubiquitous for the IoT edge uses that will be deployed soonest.

While many of TinyML’s successes to date have been in vision systems, Arabi’s Atlazo is on the trail of sensor signals – ones that convey information on vibration, humidity, temperature and pressure. With that in mind, Atlazo built its AI processor to be customized to handle the unique nature of sensor signal types, while achieving the necessary power efficiency.

Powerful vision processing is where the additional effort of incorporating AI/ML is likely to pay off first, according to Mike Henry, CEO and cofounder of Mythic. So, this is a first and definite target for the startup.

Like others, Henry points to memory and memory architecture (and related cost and power increases) as the main bottleneck for AI on the edge. Mythic’s solution to the problem is fairly novel. It’s one of a handful of chip houses working on computational analog signal processing, an approach phased out with the rise of digital computing, whose usefulness is now being rediscovered.

“Analog compute is what solves the memory bandwidth challenge,” Henry said, pointing to the benefits of staying in the analog domain for neural net processing.

“We can get extremely high levels of effective memory bandwidth,” he said. “It’s fundamentally solving the memory bandwidth challenge with new technology as opposed to rearranging where the memory and processors go in the digital world.”
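To see why memory bandwidth becomes the bottleneck Henry describes, consider a conventional digital design that re-reads every weight from off-chip memory on each inference. The Python sketch below estimates that traffic. The model size, weight precision and frame rate are hypothetical values chosen for illustration, not figures from Mythic or any other vendor, and the function name is an assumption of this example.

```python
def weight_traffic_gb_per_s(num_weights: int,
                            bytes_per_weight: float,
                            inferences_per_s: float) -> float:
    """Weight reads in GB/s if no weights stay on chip between inferences."""
    return num_weights * bytes_per_weight * inferences_per_s / 1e9


if __name__ == "__main__":
    # Hypothetical vision model: 25 million 8-bit weights at 30 frames/s.
    one_stream = weight_traffic_gb_per_s(25_000_000, 1, 30)
    # The same model applied independently to four camera streams.
    four_streams = 4 * one_stream
    print(f"one stream: {one_stream:.2f} GB/s, four streams: {four_streams:.2f} GB/s")
```

The traffic grows linearly with model size, precision and frame rate, which is why designs that keep weights close to the compute units, or avoid moving them at all, can relieve the pressure on memory.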

The rush to AI acceleration should not obscure a holistic view that includes other aspects of Edge AI chip architecture, according to Megha Daga, director for AI IoT product management at Qualcomm. She notes that the definition of “edge AI” varies widely and says Qualcomm targets chips toward distinct “genres” – such as cameras, devices, gateways – included on the Edge AI spectrum.

“There has to be a balance between the CPU, the GPU and the AI processing. Everything has to be balanced,” she said, emphasizing that application flexibility and efficiency are key needs to be met.

How the balance is set depends on the input, she added. For example, an AI engine might adjust the distribution according to the number of video camera streams.

“Memory needs to be designed in such a way that can cater to the needs of that AI throughput,” she said. “So we do focus a lot on that memory architecture.”

Compiler Arts and Magic

Compilers can set the objectives of application developers against those of hardware vendors. The compiler converts the machine learning model into running code (often executed as some form of neural network) on the edge AI chip. Compilers can be optimized for specific models. That can mean optimizing deep neural nets (DNNs), convolutional neural nets (CNNs) and language or image processing models to exploit a given chip architecture.

Each of these model types may require further optimization. In vision systems built for inspecting parts, for example, the optimization steps might differ from those used in warehouse surveillance.

It’s an art form to create compilers that ingest Python code and execute efficiently on a specific edge AI chip. And that remains true for TinyML also, although there’s much interest at the moment in using machine learning to better automate compilation.
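A toy example gives a feel for what such a compiler does. The Python sketch below lowers a list of layers into operations for an imaginary accelerator and fuses adjacent pairs that the target can execute as one step. Operator fusion is one common compiler optimization; the article does not say which optimizations any particular vendor's compiler performs, and the op names and the `FUSABLE` table here are hypothetical.

```python
from typing import Dict, List, Tuple

# Hypothetical fused ops supported by an imaginary target chip.
FUSABLE: Dict[Tuple[str, ...], str] = {
    ("conv", "relu"): "conv_relu",
    ("matmul", "relu"): "matmul_relu",
}


def lower(layers: List[str]) -> List[str]:
    """Turn a layer list into target ops, fusing adjacent pairs when supported."""
    ops: List[str] = []
    i = 0
    while i < len(layers):
        pair = tuple(layers[i:i + 2])  # current layer plus the next, if any
        if pair in FUSABLE:
            ops.append(FUSABLE[pair])  # emit one fused op for both layers
            i += 2
        else:
            ops.append(layers[i])      # no fusion available; emit as-is
            i += 1
    return ops


if __name__ == "__main__":
    model = ["conv", "relu", "conv", "relu", "pool", "matmul", "relu", "softmax"]
    print(lower(model))
    # ['conv_relu', 'conv_relu', 'pool', 'matmul_relu', 'softmax']
```

Eight layers become five operations here. A different chip would come with a different fusion table, which is one reason the same model can need a different compiler path for each target.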

The edge AI chip vendor is faced with a familiar conundrum. It’s always been the case that general-purpose processors can target a wide market, but their opportunity becomes restricted as they are specialized for specific use-cases, as analyst Gwennap noted in a keynote earlier this year at the Spring Linley Group Processor Conference.

That trade-off affects the outlooks of users too, of course.

If implementers want to embed AI in a product, they could try the existing chips they’re already using, Gwennap told IoT World Today. That system may center on a microcontroller or digital signal processor that has been updated to perform some type of machine learning.

If something more complicated is required, “you probably want to get a chip that’s been designed and optimized to do AI,” Gwennap said. The user’s job is then to match the application to one of the different techniques offered by a slew of AI-specific IC makers.

“It’s really a question of [whether to] use a chip designed for AI, or use what you’ve already got,” he said.

“The good news is there are a lot of standard open-source AI models that are available. If you can adapt them to your requirements, you can certainly do a lot of things without being an expert in AI,” he continued.

For significantly differentiated applications, however, a company may well have to build and integrate its own neural network model, Gwennap added. And getting that to run as designed, on a given piece of silicon, could very well take a considerable amount of effort.

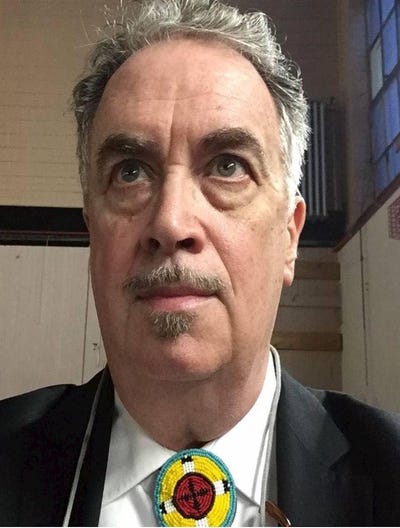
