Q&A: Intel Upbeat about Edge and IoT Market

An Intel exec explains how the firm was able to offer a dramatic performance boost in its latest vision processing unit, and dishes on the IoT market.

November 14, 2019

In the early 2010s, the Internet of Things captivated the imagination of a handful of analysts, researchers and vendors. Along the way, the prediction that there would be 50 billion connected devices by 2020 took hold and was repeated endlessly, like a bad pop song. That prediction and other permutations of it related to the IoT market continue to pop up: 25 billion IoT devices by 2021, 29 billion by 2022, one trillion by 2035…

“Who cares?” asked Jonathan Ballon, vice president of Intel’s Internet of Things Group.

What Ballon does care about is the value IoT projects generate, in the form of data as well as dollars. “We play at the higher end of the market. Most people think of IoT as low-cost MCUs and dumb data sources on the billions of devices,” he said. “That’s not our game. We believe that in five years, we (humans and machines) will create 10 times more data than we did this year.” And he expects 70% of that data to be created at the edge. “And only half of that data is going to make it back to a public cloud. The rest is going to be stored, processed and analyzed at the edge. And that requires a whole different approach to hardware systems.”
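To make those proportions concrete, here is a minimal Python sketch of the arithmetic. It assumes that "half of that data" refers to the edge-created share rather than to all data, and the function name, parameter names and the example total of 10 units are our own illustrative choices, not figures from Intel.

```python
def edge_data_split(total_data, edge_share=0.70, edge_to_cloud_share=0.50):
    """Split a total data volume using the shares quoted in the article.

    Assumptions (ours, for illustration only):
      - edge_share: fraction of all data created at the edge (70%).
      - edge_to_cloud_share: fraction of edge-created data that reaches
        a public cloud (we read "half of that data" as half of edge data).
    """
    edge_created = total_data * edge_share
    edge_to_public_cloud = edge_created * edge_to_cloud_share
    kept_at_edge = edge_created - edge_to_public_cloud
    created_elsewhere = total_data - edge_created
    return {
        "edge_created": edge_created,
        "edge_to_public_cloud": edge_to_public_cloud,
        "kept_at_edge": kept_at_edge,
        "created_elsewhere": created_elsewhere,
    }


if __name__ == "__main__":
    # 10 units stands in for "10 times more data" relative to 1 unit today.
    for name, value in edge_data_split(10.0).items():
        print(f"{name}: {value:.2f}")
```

Under that reading, roughly a third of all data created would be stored, processed and analyzed at the edge without ever reaching a public cloud.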


Speaking of hardware, the company recently unveiled new products at its Intel AI Summit, while also divulging that it expects its AI portfolio to generate more than $3.5 billion in revenue this year. It announced a Nervana neural network processor for training known as NNP-T1000 and another for inference, called NNP-I1000. Intel also unveiled its Movidius Myriad Vision Processing Unit, code-named Keem Bay, for edge media, computer vision and inference use cases. Offering a roughly tenfold performance improvement over the previous-generation product, Keem Bay is significantly faster and up to six times more power-efficient than rivals.

At the Intel AI Summit, we had the opportunity to sit down with Ballon, who shared his thoughts on everything from Metcalfe’s law to edge computing to the next-gen Movidius Myriad vision processing unit, code-named Keem Bay. The responses have been edited for brevity.

You’ve been active in Internet of Things roles for a while. How do you think the paradigm for connected devices evolved in the past decade or so?

Ballon: I was at Cisco for a long time, and we talked about Metcalfe’s law, a mathematical formula that was developed by Bob Metcalfe in the 1980s. [Ed note: In essence, Metcalfe’s law holds that the value of a network is proportional to the square of the number of devices or users.]
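As a rough illustration of the law described in the editor’s note, let V(n) stand for the value of a network and n for the number of connected devices or users. Both symbols are our own shorthand, not Ballon’s. Under that proportionality, doubling the number of connections quadruples the value:

```latex
V(n) \propto n^{2}
\qquad\Longrightarrow\qquad
\frac{V(2n)}{V(n)} = \frac{(2n)^{2}}{n^{2}} = 4
```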

The idea applied to Ethernet ports, but then in the mid-2000s, social networking started getting popular, and then, around 2008 or 2009, people started doing a lot more with machine learning. We had this idea that: ‘Anything that can compute and connect to the network will.’ At that point, it was just computers and phones. So we thought: ‘Wow, when all of these devices connect, it will create a Cambrian explosion of value.’

And so every year we would write our point of view and talk about this upcoming thing, and it never happened. The only people who were talking about the Internet of Things were academics, analysts, researchers and systems integrators.

But Sand Hill Road or big companies weren’t investing in any IoT companies.

How did you come to serve as co-founder and chief operating officer of GE Digital from 2012 to 2014?

Ballon: One day, Jeff Immelt [former chief executive officer of GE], called and said: ‘I think software and analytics is the future of our businesses.’

I thought: ‘Yes, this is the opportunity to catalyze the industry.’

What’s compelling about GE is that the markets they serve are these large industrial markets. It’s not a consumer market. The stakes are much, much higher.

So I did that for a couple of years and realized: ‘Okay, mission accomplished. We’ve catalyzed the industry. People now understand the Internet of Things and, more specifically, the opportunity that the industrial internet creates.’

What led you to Intel?

Ballon: Intel had an embedded silicon group. They were taking silicon that had been designed for a data center, designed for a PC, phone, tablet or network, and they were making it relevant to IoT customers. And that meant doing a number of things: for one, it had to span a huge range of temperatures, and it had to be able to operate 24-7 for years. In some cases, you had to be able to support that and be able to ensure that it works. But it went way beyond that. You need to be able to deliver specific functionality related to safety. In the case of a robot, for example, it needs to shut down in milliseconds if it is at risk of endangering its operator. It also means having functionality like time-coordinated computing, so if you have robots on a manufacturing line, they’re coordinated within 10 milliseconds of each other for high-precision work. You also have things like time-synchronized networking. All of these are things that most data-center and PC-network customers don’t think about, but IoT customers absolutely do.

About five years ago, Intel decided to create an IoT Group within the company, meaning it was going to publicly report the financial statement and be governed by SEC regulations. That legitimized it within the company.

Since then, we’ve done a tremendous amount of things in terms of hardware innovation, software innovation, tools and especially investment in our ecosystem to bring IoT to scale. This year, we’ll do $4 billion in revenue on $5 billion in sales.

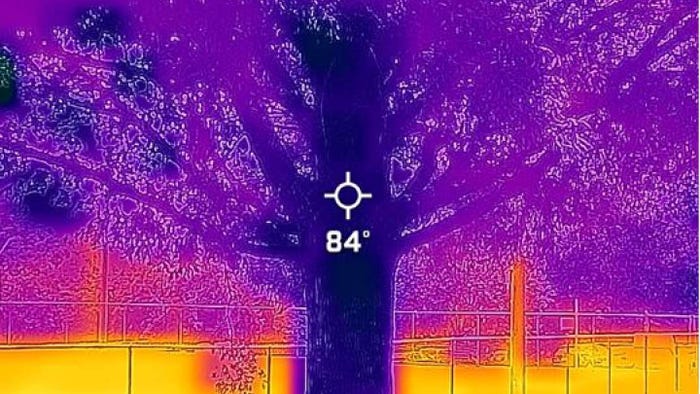

We care about the value IoT devices generate. The volume of data is growing exponentially, and 30% of this data needs to be processed in real-time. And there’s a variety of data. It’s not just machine data. It’s video, it’s voice and other audio. And you’re getting into more sophisticated different data types, like hyperspectral imaging and 3D imaging.

How do you relate IoT to edge computing?

Ballon: For us, edge and IoT are intertwined.

We’re embarking on the emergence of a truly distributed computing architecture — the device through the network to the cloud — where data and compute and analytics and value will move to wherever the optimal point in that architecture is.

And this is not a new concept. In the 1970s, academics were talking about distributed computing architectures. And it wasn’t until 1997 when Eric Schmidt, who was then the chief technology officer at Sun Microsystems, said famously: ‘When the network becomes as fast as the processor, the computer hollows out and spreads across the network.’ To me, that’s the definition of distributed computing, and it applies to edge, cloud and IoT. It’s not a pendulum swing one way or another. It’s ubiquitous computing.

Here are some great metrics. We’ve deployed 500 factories this year for machine vision systems for quality control, defect reduction and things like that. And when you look at the amount of data that resides locally in the factory versus what we send to the cloud, the ratio is 2000-to-1. In our autonomous driving business, Mobileye, the ratio is 400,000-to-1.

Can you explain at a high level what enabled the high-performance Movidius Myriad Vision Processing Unit, also known as “Keem Bay”? It offers more than 10 times the inference performance of the previous Movidius VPU while drawing a fraction of the power.

Ballon: Here’s the metaphor that I think about. When people started running competitively in the late 1800s, they wore leather shoes. And here we are, more than 100 years later, and, last month, someone ran a marathon in less than two hours for the first time. He wasn’t wearing leather shoes. He was wearing highly engineered footwear from Nike that was purpose-built for running a marathon. And I think that’s one of the overarching themes of today, which relates to this idea of Keem Bay. You have to build purpose-built products for the application.

For a long time, people have equated AI with GPUs. And a GPU is great at some things, but not everything. Just like you wouldn’t go running in leather shoes, you’re not going to throw a GPU at an inference workload at the edge. It’s just not the right thing. So Keem Bay has a brand new architecture. It is groundbreaking because it was, from the ground up, built for edge inference.

Keem Bay can be optimized for customers that want to use our toolkit, OpenVINO. If customers are willing to use our toolkit, they get 50% more performance. You can only do that with something that’s been engineered for the task at hand.

What kind of development process was behind Keem Bay?

Ballon: We had 1,000 people working on that chip. We started working on it a year and a half ago.

We’ve got two more chips to follow in the development phase now. Our goal is to be at a one-and-a-half year cadence for this type of innovation. Probably within two years, we’ll be at an annual cadence.

Intel acquired Movidius in 2016. How did Intel integrate the technological background from both companies to create the Movidius Myriad Vision Processing Unit?

Ballon: When we acquired Movidius, this was largely a camera technology. A couple of customers, like Magic Leap, wanted to use it for AR/VR. It also goes into drones. DJI is a huge customer.

When we got the Movidius technology in-house, we could take the ingenuity of these Movidius engineers and combine it with what some of the Intel people knew. All of a sudden, we knew we could develop this architecture into something that spans from a chip in a camera into something that goes into robots, kiosks, retail systems and MRI machines. It was pretty amazing.

So the integration process with Movidius was straightforward?

Ballon: There was that period in the beginning, similar to when you bring home a new pet. You have to get used to them. They have to get used to you. But eventually, we grew to love each other.

Where do you see the edge and IoT market next year? Intel’s most recent Form 10-K said the IoT Group “achieved record revenue and operating income in 2018 on broad business strength and growing demand for edge computing and computer vision-based applications.”

Ballon: If you look at the market for edge inference, the demand for edge inference is bigger than the market for cloud and data center inference and training combined. It’s massive. And it is massive because 75% of inference devices are going to be at the edge because that’s where the data is. So follow the data. And that’s where you find the opportunity. That’s where you find the money.
