Robot Learns to Respond to Human Facial Expressions

The Columbia University design uses AI to predict and mimic human facial expressions to improve human-robot interactions

Columbia University engineers have designed an AI-enabled robotic face that can anticipate and mimic human facial expressions, an achievement the team calls a “major advance” in human-robot interaction.

While programs such as ChatGPT are increasingly used to improve robots’ verbal communication skills, their nonverbal communication, such as facial expressions and body language, is still being developed.

The team said their design, dubbed Emo, is a step forward in this field.

Developed by the university’s Creative Machines Lab, Emo can anticipate a person’s facial expressions and greet them with a smile. According to the team, it can predict a smile 840 milliseconds before it happens.

The robot is equipped with 26 actuators covered by soft silicone skin, with high-resolution cameras integrated into its eyes to allow it to make eye contact.

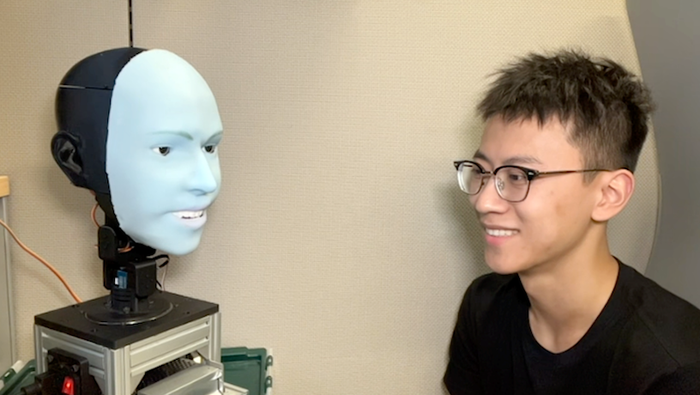

Yuhang Hu of Creative Machines Lab face-to-face with Emo. Credit: Creative Machines Lab

To train the robot, the team developed two AI models: one that analyzes subtle changes in a person’s face to predict their upcoming expression, and another that generates the motor commands the robot needs to produce the corresponding expression on its own face.

Over a few hours, the robot learned the relationship between its motor commands and the facial expressions they produce, a process the team calls “self-modeling”. After training, Emo could predict people’s facial expressions by detecting small changes in their faces.

“I think predicting human facial expressions accurately is a revolution in human-robot interaction,” said Yuhang Hu, study lead author. “Traditionally, robots have not been designed to consider humans' expressions during interactions. Now, the robot can integrate human facial expressions as feedback.

“When a robot makes co-expressions with people in real-time, it not only improves the interaction quality but also helps in building trust between humans and robots. In the future, when interacting with a robot, it will observe and interpret your facial expressions, just like a real person.”

“Although this capability heralds a plethora of positive applications, ranging from home assistants to educational aids, it is incumbent upon developers and users to exercise prudence and ethical considerations,” said Hod Lipson, lead researcher. “But it’s also very exciting: by advancing robots that can interpret and mimic human expressions accurately, we’re moving closer to a future where robots can seamlessly integrate into our daily lives, offering companionship, assistance, and even empathy.

“Imagine a world where interacting with a robot feels as natural and comfortable as talking to a friend.”
