Nvidia CEO Touts H200 Chips as 'Second Wave of AI' Looms

At an AI conference, the Nvidia CEO says new chips could cut inferencing costs in half. He also praises open-source work.

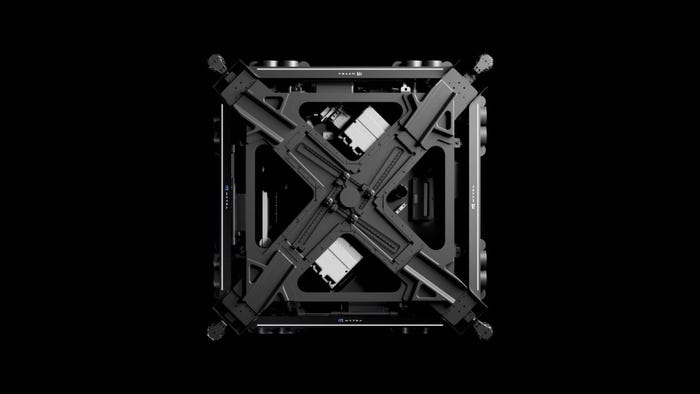

Nvidia CEO Jensen Huang said the chipmaker is “ramping up” plans to improve inferencing performance through its new hardware lines, hot on the heels of unveiling its new H200 chips.

Speaking at the recent ai-PULSE tech conference in Paris, Huang said the new H200 chips can double inferencing performance “without changing anything” in the system or the software stack.

The H200 chips were unveiled recently as the successor to Nvidia’s flagship H100s. Huang said the improved performance would cut users' inferencing costs in half.

Nvidia is also stepping up its plans for the GH200 Grace Hopper Superchip, with the new hardware able to expand the number of interconnected GPUs from eight to 32.

“That's essentially saying we're going to build ... a virtual giant GPU,” Huang said.

‘Every Country Needs to Build Their Sovereign AI’

Huang also spoke about the rise in companies building AI. While early adopters came from the U.S., he said the next wave should see “a recognition that every region and every country needs to build their sovereign AI.”

“All the different countries in Europe, they have their own language, they have their own culture that really wants to get captured in their foundation model,” he added. “The different companies and different industries that benefit from AI are different than the consumer internet companies here in the U.S.”

Huang said that the second wave of AI will be the expansion of generative AI around the world and the adoption of AI in different industries, “not just internet service companies, but to the largest of industries, the traditional industries.”

Europe Champions Open-Source AI

Europe is home to some of the champions of open-source AI. Huang mentioned Demis Hassabis, CEO of Google DeepMind, and Yann LeCun, Meta's chief AI scientist. The Nvidia CEO also said he was “a huge fan.”

“Without open source, how would AI have made tremendous progress in the last decade? You have to ask yourself, ‘If not for Llama 2, if not for the exciting Mistral 7B, how would the energy around generative AI be as great as it is across all of the industries beyond just a couple of companies?’” he said. “The ability for open source to energize the vibrancy and pull in the research and the engagement of every startup, every researcher, every industry, is really quite vital.”

Nvidia is among the backers of Hugging Face, the open-source AI platform where many of the major models he mentioned are housed. Huang said that he “loved” the work that Hugging Face does.

Hugging Face “keeps the vibrancy of the ecosystem, the ability for us to do research, not just for the innovation of models, but the safety of the models, the guard-railing, the fine-tuning, all of the different ways that we could keep AI safe and responsible. That technology needs to be innovated in open space,” Huang said. “Open source, both the technology of it, the culture of it, and I think the social engagement of it is really essential, quite vital.”
