MIT Reveals Groundbreaking Simulator for Autonomous Vehicles

The data-driven simulation engine helps vehicles learn to drive in the real world and recover from near-crash scenarios

June 23, 2022

Researchers at the Massachusetts Institute of Technology (MIT) have created a world-first open-source photorealistic simulator for autonomous driving.

VISTA 2.0 is described as “a data-driven simulation engine where vehicles can learn to drive in the real world and recover from near-crash scenarios.” Data-driven means it is built and photorealistically rendered from real-world data.

Currently, this kind of highly advanced simulation is the exclusive domain of some of the companies leading the way in automated driving tech, which rely on data only they have access to – such as edge cases, near misses and crashes – to enable accurate simulations.

Without access to this sort of data, training AI models for autonomous vehicles is difficult. It is clearly undesirable, for example, to deliberately crash cars into other cars just to teach a neural network how to avoid doing so.

But that’s where VISTA 2.0 can change things and break new ground, with its code open-sourced to the public.

“With this release, the research community will have access to a powerful new tool for accelerating the research and development of adaptive robust control for autonomous driving,” said MIT Professor and CSAIL Director Daniela Rus.

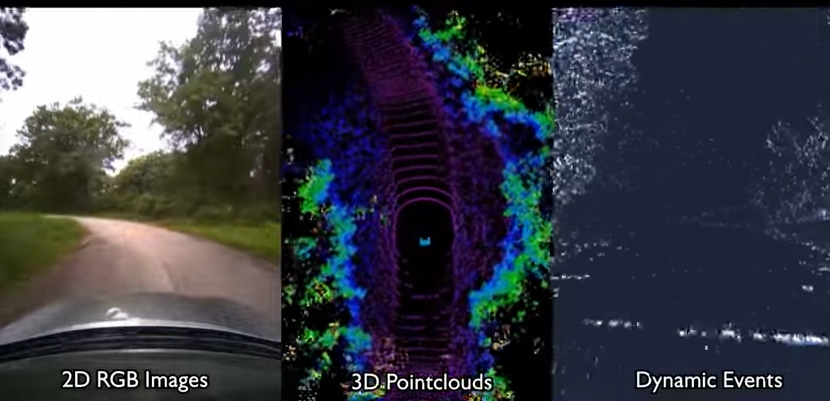

VISTA 2.0 builds off a previous MIT model, VISTA, but moves things on significantly. While the initial iteration of VISTA supported only single-car lane-following with one camera sensor, VISTA 2.0 can simulate complex sensor types and interactive scenarios and intersections at scale.

Among the driving tasks VISTA 2.0 can recreate and scale in complexity are lane following, lane turning, car following, static and dynamic overtaking, as well as road scenarios where multiple agents are involved.

“This is a massive jump in capabilities of data-driven simulation for autonomous vehicles,” said Alexander Amini, a Ph.D. student at MIT’s Computer Science and Artificial Intelligence Laboratory (CSAIL) and co-lead author on two new papers, together with fellow Ph.D. student Tsun-Hsuan Wang.

“VISTA 2.0 demonstrates the ability to simulate sensor data far beyond 2D RGB cameras, but also extremely high dimensional 3D LiDARS with millions of points, irregularly timed event-based cameras, and even interactive and dynamic scenarios with other vehicles as well,” Amini said.

Lidar sensor data has traditionally been hard to interpret in a data-driven world as it effectively requires trying to generate brand-new 3D point clouds with millions of points from sparse views of the world.

To synthesize 3D Lidar point clouds, the MIT researchers used data collected from a car, projected it into a 3D space, and then let a new “virtual” vehicle drive around locally from where the original vehicle was. Finally, they projected all of that sensory information back into the frame of view of the new virtual vehicle, with the help of neural networks.
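The geometric core of that description can be illustrated with a short Python sketch. This is an illustration under stated assumptions, not VISTA 2.0's actual code: the function names, the flat-ground pose (a sideways and forward offset plus a heading change), and the axis convention (x forward, y left) are all hypothetical, and the neural-network step the researchers mention is not shown.

```python
import math
from typing import List, Tuple

Point = Tuple[float, float, float]  # (x, y, z) in metres; x forward, y left


def reproject_to_virtual_frame(
    points: List[Point], dx: float, dy: float, dyaw: float
) -> List[Point]:
    """Express recorded points in the frame of a nearby virtual vehicle.

    Assumption: the virtual vehicle sits at (dx, dy) with heading dyaw
    (radians), all measured in the recorded vehicle's frame.
    """
    cos_y, sin_y = math.cos(dyaw), math.sin(dyaw)
    moved = []
    for x, y, z in points:
        tx, ty = x - dx, y - dy  # shift the origin to the virtual vehicle
        # rotate by -dyaw so the axes line up with the virtual heading
        moved.append((cos_y * tx + sin_y * ty, -sin_y * tx + cos_y * ty, z))
    return moved


def to_range_azimuth(points: List[Point]) -> List[Tuple[float, float]]:
    """Convert points to (range, azimuth) pairs, as a spinning sensor sees them."""
    return [
        (math.sqrt(x * x + y * y + z * z), math.atan2(y, x))
        for x, y, z in points
    ]


# A point 10 m straight ahead of the recorded car, seen from a virtual car
# placed 1 m to the left with the same heading.
recorded = [(10.0, 0.0, 0.0)]
virtual_view = reproject_to_virtual_frame(recorded, dx=0.0, dy=1.0, dyaw=0.0)
print(virtual_view, to_range_azimuth(virtual_view))
```

A purely geometric shift like this leaves gaps wherever the virtual vehicle can see surfaces the original scan missed, which is presumably where the neural networks mentioned by the researchers come in.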

Combined with the simulation of event-based cameras, VISTA 2.0 was capable of simulating this multimodal information in real time, making it possible to train neural nets offline and also to test them online on the car in augmented reality set-ups.

“The central algorithm of this research is how we can take a dataset and build a completely synthetic world for learning and autonomy,” said Amini. “It’s a platform that I believe one day could extend in many different axes across robotics.

“We’re excited to release VISTA 2.0 to help enable the community to collect their own datasets and convert them into virtual worlds where they can directly simulate their own virtual autonomous vehicles, drive around these virtual terrains, train autonomous vehicles in these worlds, and then can directly transfer them to full-sized, real self-driving cars,” he said.
