How data analysts should exploit edge data models for real-time data insight but also enlist the cloud for less time-sensitive tasks.

September 9, 2020

Key takeaways from this article include the following:

Edge computing offers new opportunities for data analysis and modeling in real time.

IoT edge data models, however, require less monolithic approaches to data lakes and modeling.

Data analysts need new skills to properly categorize, ingest and then manage this data at the edge and in the cloud.

Enterprises are eager to use IoT data to understand costs, operations and their future prospects.

The data generated by mobile devices and Internet of Things (IoT) devices enables organizations to reduce costs, improve operational efficiency and innovate. But only if organizations can capture meaning from data at the right time.

Providing meaning and context to data, in turn, makes data analysts responsible for building data models that can deliver that meaning. Further, that data is high volume and coming at great speed, from many disparate locations.

In turn, data analysts should design edge data stores for speed and real-time insight in addition to monolithic data stores, like data lakes, to answer numerous questions for every business unit and purpose.

“Instead of flowing the data from domains into a centrally owned data lake or platform, domains need to host and serve their domain datasets in an easily consumable way,” wrote Zhamak Dehghani in a piece on moving from centralized data lakes for real-time data needs.

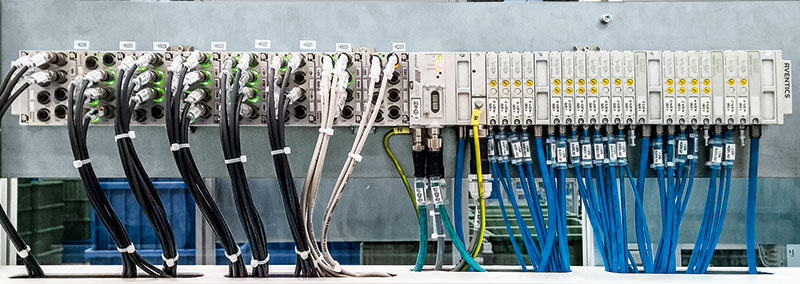

This is particularly true at the edge, where compute processing resources may be at a premium, even as the number of devices proliferates. With edge computing, a data algorithm can run on a local server or gateway, or even on the device itself, enabling more efficient real-time applications that are critical to companies.

“Edge puts that compute power right there, where the action is happening,” said Lerry Wilson, senior director innovation and digital ecosystems at Splunk, a machine learning platform.

A new forecast from International Data Corporation estimates that there will be 41.6 billion IoT devices, or “things,” generating 79.4 zettabytes (ZB) of data in 2025. This data also needs to be aggregated from myriad devices and business units — often siloed and in different formats, which creates additional complexity.

“The complexity inside these organizations has gone beyond the capacity of just keeping these devices up and running,” Wilson said. “You have to be able to see how this device interacts with this device. From a security perspective, it’s absolutely critical, but also from an operational and business perspective.”

Data Volume and Velocity Dictate New Edge Data Models

Effectively using edge computing for rapid data processing requires a different approach to data modeling and ingestion, practitioners emphasize. Data analysts need to consider how the data will be used and how much latency can be tolerated, as well as its security and storage requirements.

That will require a hybrid approach to data ingestion as well as new edge data models that favor speed rather than overarching, monolithic data models.

“Taking the raw data from hundreds of thousands of sensors and doing minimal processing there and then sending the rest of the data to a centralized location — that approach is going to become more popular,” said Dan Sullivan, principal engineer at Peak Technologies.

So, for example, if IoT data can be used to detect a business-critical anomaly, that data may need to be processed right away. Environmental data over time, however, may not be critical and could be sent to the cloud or an on-premises data center for further processing instead.
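As a rough illustration of that split, the sketch below routes readings by how time-sensitive they are. The metric names, the limits in `EDGE_LIMITS` and the `route` function are all hypothetical and chosen only for the example; a real deployment would take them from its own device specifications.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass(frozen=True)
class Reading:
    sensor_id: str
    metric: str
    value: float


# Hypothetical operating limits for metrics that need an immediate decision
# at the edge. Metrics not listed here are treated as not time-sensitive.
EDGE_LIMITS: Dict[str, Tuple[float, float]] = {
    "vibration_mm_s": (0.0, 7.1),
    "pressure_kpa": (80.0, 120.0),
}


def route(readings: List[Reading]) -> Tuple[List[Reading], List[Reading]]:
    """Split readings into edge alerts and a queue for later cloud upload."""
    alerts: List[Reading] = []
    cloud_queue: List[Reading] = []
    for reading in readings:
        limits = EDGE_LIMITS.get(reading.metric)
        if limits is not None:
            low, high = limits
            if not (low <= reading.value <= high):
                # Out-of-range value on a critical metric: act on it locally.
                alerts.append(reading)
                continue
        # Everything else can tolerate latency and goes to the cloud queue.
        cloud_queue.append(reading)
    return alerts, cloud_queue
```

In this sketch an out-of-range vibration reading would be handled on the gateway, while a routine temperature reading would simply wait in the queue for the next upload.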

At the same time, while decision makers want this data at the ready, data preparation and cleansing can get in the way of fast data processing.

Indeed, nearly 40% of data professionals spend more than 20 hours per week accessing, blending and preparing data rather than performing actual analysis, according to a survey conducted by TMMData and the Digital Analytics Association.

IoT data still requires this preparation, but analysts say it is now preferable to create less monolithic models upfront, avoiding models that require heavy data-joining logic. The goal is to ingest data quickly, then query the data in targeted ways.

“Rather than figure out a complex solution, let’s have two simple solutions — rather than one monolithic solution. You don’t try to get one data model to answer all of your questions when they are really different. Because there is so much data, you want to target a subset of the data quickly.”
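One way to picture "two simple solutions" is a pair of small, purpose-built stores fed by the same stream, each answering a single question. The class names and the choice of questions below (latest value per sensor, hourly average per sensor) are assumptions made for illustration, not a design described by the practitioners quoted here.

```python
from collections import defaultdict
from datetime import datetime
from typing import Dict, List, Optional, Tuple


class LatestValueStore:
    """Answers one question: what is this sensor reporting right now?"""

    def __init__(self) -> None:
        self._latest: Dict[str, Tuple[datetime, float]] = {}

    def ingest(self, sensor_id: str, ts: datetime, value: float) -> None:
        current = self._latest.get(sensor_id)
        if current is None or ts >= current[0]:
            self._latest[sensor_id] = (ts, value)

    def latest(self, sensor_id: str) -> Optional[Tuple[datetime, float]]:
        return self._latest.get(sensor_id)


class HourlyAverageStore:
    """Answers a different question: how did this sensor trend per hour?"""

    def __init__(self) -> None:
        # Maps (sensor_id, hour) to a running [sum, count] pair.
        self._sums: Dict[Tuple[str, datetime], List[float]] = defaultdict(
            lambda: [0.0, 0]
        )

    def ingest(self, sensor_id: str, ts: datetime, value: float) -> None:
        hour = ts.replace(minute=0, second=0, microsecond=0)
        bucket = self._sums[(sensor_id, hour)]
        bucket[0] += value
        bucket[1] += 1

    def average(self, sensor_id: str, hour: datetime) -> Optional[float]:
        total, count = self._sums.get((sensor_id, hour), (0.0, 0))
        return total / count if count else None
```

Neither store needs joins or a shared schema, so each can be ingested into quickly and queried for its own narrow purpose.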

Sullivan noted that some of the data might also be sent to the cloud for machine learning and algorithm training. That data might not require such low latency or quick turnaround.

“The raw data is important from a machine learning perspective,” Sullivan said. “It doesn’t have the latency requirements that, say, anomaly detection data does. You may fork off that data for machine learning purposes and slowly load that up to a cloud object store.”
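The "fork off and slowly load" idea can be sketched as a buffer that collects raw records and ships them in batches. `BatchForwarder`, its `upload` callback and the `raw/batch-…` key format are hypothetical; the callback stands in for whatever client an organization uses to write to its object store, and no specific cloud SDK is assumed.

```python
import json
from typing import Callable, Dict, List


class BatchForwarder:
    """Buffers raw records and uploads them in batches as JSON Lines."""

    def __init__(
        self, upload: Callable[[str, bytes], None], batch_size: int = 500
    ) -> None:
        # `upload(key, body)` is an injected, hypothetical object-store writer.
        self._upload = upload
        self._batch_size = batch_size
        self._buffer: List[Dict] = []
        self._batch_no = 0

    def add(self, record: Dict) -> None:
        self._buffer.append(record)
        if len(self._buffer) >= self._batch_size:
            self.flush()

    def flush(self) -> None:
        """Upload whatever is buffered; does nothing if the buffer is empty."""
        if not self._buffer:
            return
        body = "\n".join(json.dumps(r) for r in self._buffer).encode("utf-8")
        self._upload(f"raw/batch-{self._batch_no:06d}.jsonl", body)
        self._batch_no += 1
        self._buffer = []
```

Because this path has no tight latency requirement, the batch size can be tuned for bandwidth and cost rather than speed, with `flush()` called on a timer or at shutdown to send any remainder.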

This new approach to data modeling echoes a paper on IoT data management, which asserts that IoT data models need to be more flexible to accommodate real-time data management and large volumes of data.

“Database models that depart from the relational model in favor of a more flexible database structure are gaining considerable popularity, although it has been shown that parallel relational [database management systems (DBMSs)] outperform unstructured DBMS paradigms.”

IoT Data Management Skills Remain a Hurdle

These kinds of decisions about where the data goes and how to make the greatest use of it require unique skill sets, experts agree.

“Data scientists are … master of all jacks,” wrote Rashi Deshai in an article about the top skills for data scientists. “They have to know math, statistics, programming, data management [and] visualization … 80% of the work goes into preparing the data for processing in an industry setting.” With data volumes continuing to explode, data management has become a data scientist skill.

Organizations may lack these skills in-house, however. Either they need to cultivate these skills through additional education and training or enlist third-party partnerships.

“The holdup is going to be with people,” Sullivan said. “There just aren’t a lot of people who understand how to work with data at scale. It’s expensive to do this on-prem, but it requires a lot of engineering experience. People who can write the data-engineering pipelines are needed to extract value from it.”

As another data management expert indicates, this requires a jack-of-all-trades skill set.

The data echoes a long-standing skills shortage in IT data management.

According to IoT World Today’s August 2020 IoT Adoption Survey, lack of in-house skills is a blocker for IoT projects: 27% of survey respondents said that their lack of expertise was getting in the way of IoT deployments.

Splunk’s Wilson says that silos between operational and information technology departments have also been a holdup, but OT is beginning to see the virtues of automation and machine learning at the edge.

“People are starting to put the tools and the processes in place for real-time machine learning,” Wilson said. “They are embracing the concept — and the work to get there.”
