After acquiring the startup MindMeld for $125 million in 2017, the networking giant Cisco is making its core conversational AI platform open source.

May 14, 2019

When conversational interfaces work well, they can seem magical. In a home, for instance, a user can speak a single sentence and trigger a suite of actions at once, perhaps launching a party mode with mood lighting and music playing across the house. Adoption in the enterprise lags further behind, but conversational AI could be transformative in customer service, with chatbots and voice assistants doing the heavy lifting on routine customer queries while human agents handle more specific questions. A handful of hotels have already experimented with smart speakers that let guests, say, request extra towels, pillows or blankets.

By 2021, Gartner expects AI in general to process 21% of customer service interactions, four times more than in 2017. ABI Intelligence projects that by next year, roughly eight out of ten businesses will have deployed chatbots, a category that here includes systems capable of understanding either natural voice or text commands. According to Juniper Research projections described by Retail Dive, chatbot-driven interactions could generate cost savings for retailers of $439 billion by 2023.

But while smart speakers saw 78% growth in 2018 over the prior year, according to research from NPR and Edison Research, conversational platforms generally remain nascent in the enterprise. Last June, Gartner’s “Market Guide for Conversational Platforms” noted that only 4% of businesses had deployed such interfaces.

Part of the reason conversational AI adoption has been slow is that the underlying software can be challenging to develop. The technology is moving swiftly, and the market is fragmented. Large tech companies with substantial resources have tended to create the most advanced conversational AIs, leaving smaller and mid-sized players at something of a disadvantage.


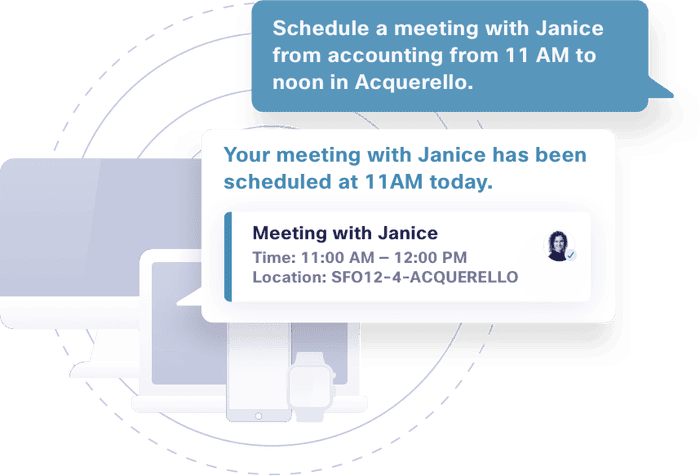

To help drive progress in the market, Cisco recently announced plans to open-source its Python-based MindMeld conversational AI platform. The company is also releasing documentation aimed at machine learning engineers in the form of its Conversational AI Playbook.

According to Karthik Raghunathan, director of research at MindMeld, the startup Cisco acquired in 2017, the MindMeld conversational platform lets developers and machine learning scientists focus on the machine learning aspects of their build-out without having to worry about the underlying “plumbing.”

The platform simplifies the creation of conversational AI apps while also giving machine learning engineers the flexibility to fine-tune algorithms, Raghunathan said. “With the MindMeld platform, you should have all the components you need for doing the various steps required in a traditional NLP platform,” he said. “You can use it out of the box if you want. But I think where our platform really shines is in exposing all of the internals.” Engineers who want to experiment with machine learning models or modify settings can do so. “You have the flexibility to tweak a lot of different knobs to get it to a highly accurate system.”

There are several options for creating conversational AI applications. For instance, Artificial Intelligence Markup Language (AIML), an offshoot of XML, can be used to author chatbots, while natural language processing (NLP) and natural language understanding (NLU) are the core disciplines behind them. There is also a host of competing cloud-based NLP APIs and platforms. Google has Dialogflow, Facebook has Wit.ai and PyText, and IBM Watson and Microsoft Azure both offer NLP capabilities. “These are all cloud-based APIs that streamline application development,” Raghunathan said. The APIs “provide you with a simple interface where you can mention a few examples of what kind of utterances your chatbot or assistant is expected to handle,” he added.

The platforms make it relatively simple to create a prototype of a conversational AI. “But what we found is the moment you have to do anything more complex (use specialized terminology or showcase deep domain understanding), that’s where it was difficult for us to use those sort of out-of-the-box natural language processing cloud APIs,” Raghunathan said. Another hurdle, he said, is that the machine learning components in these platforms are essentially a black box, making it impossible to tweak their parameters or try out different algorithms.

One solution to that problem is to use machine learning libraries such as TensorFlow and other Python-based toolkits, which offer state-of-the-art algorithms. “But to get started with them, you need to have something like a master’s or Ph.D. in machine learning, or at least some amount of data science expertise to make good use of them,” Raghunathan said.

Using off-the-shelf tools to create conversational AI systems can require significant ramp-up time. “The barrier to entry is quite high,” Raghunathan noted. “You need to understand how to use these tools efficiently.”

Another hurdle awaiting engineers who have created successful machine learning models is the transition to creating a production-ready application. Such apps require an array of components separate from the machine learning models. “You need some sort of a language parsing mechanism, you need a dialogue management framework, you need an information retrieval or a search engine component,” Raghunathan explained.
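The three components Raghunathan lists can be illustrated with a deliberately tiny sketch in plain Python. This is not MindMeld's API or Cisco's design; every name below (`TRAINING`, `INVENTORY`, `parse`, `search`, `respond` and the handlers) is hypothetical. It assumes a word-overlap intent matcher standing in for the language parser, a dictionary of intent handlers standing in for dialogue management, and a keyword lookup over a small hotel inventory standing in for the search component. A production system would replace each piece with trained models and a real search engine.

```python
# A toy conversational pipeline: parser -> dialogue manager -> retrieval.
# All names are hypothetical and for illustration only; this is NOT
# MindMeld's API.
import re
from typing import Callable, Dict, List

# Hypothetical example utterances per intent, like the "few examples"
# that cloud NLP APIs ask developers to supply.
TRAINING: Dict[str, List[str]] = {
    "request_item": [
        "can i get extra towels",
        "please send more pillows",
        "i need another blanket",
    ],
    "greet": ["hello", "hi there", "good morning"],
}

# Hypothetical knowledge base standing in for a search-engine component.
INVENTORY: Dict[str, str] = {
    "towel": "extra towels",
    "pillow": "extra pillows",
    "blanket": "an extra blanket",
}


def tokenize(text: str) -> List[str]:
    """Lowercase the text and split it into alphabetic words."""
    return re.findall(r"[a-z]+", text.lower())


def parse(text: str) -> str:
    """Language parsing: choose the intent whose best example shares the most words."""
    words = set(tokenize(text))
    scores = {
        intent: max(len(words & set(tokenize(ex))) for ex in examples)
        for intent, examples in TRAINING.items()
    }
    best = max(scores, key=scores.get)
    # No word overlap at all means we cannot guess an intent.
    return best if scores[best] > 0 else "unknown"


def search(text: str) -> List[str]:
    """Information retrieval: look up inventory items mentioned in the text."""
    # Crude singularization so that "towels" matches "towel".
    stems = {w[:-1] if w.endswith("s") else w for w in tokenize(text)}
    return [desc for item, desc in INVENTORY.items() if item in stems]


def handle_greet(text: str) -> str:
    return "Hello! How can I help with your stay?"


def handle_request(text: str) -> str:
    items = search(text)
    if not items:
        return "Which item would you like?"
    return "Sending " + " and ".join(items) + " to your room."


# Dialogue management: route each parsed intent to its handler.
HANDLERS: Dict[str, Callable[[str], str]] = {
    "greet": handle_greet,
    "request_item": handle_request,
}


def respond(text: str) -> str:
    """Run one utterance through parsing, dialogue management and retrieval."""
    handler = HANDLERS.get(parse(text))
    return handler(text) if handler else "Sorry, I didn't understand that."
```

For example, `respond("Could you bring extra towels?")` returns `"Sending extra towels to your room."`, while an utterance that shares no words with any example, such as `"What time is checkout?"`, falls through to the fallback reply. Even in this toy, each stage can fail independently, which is why production systems treat parsing, dialogue management and retrieval as separate components.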

Engineers working on conversational AI systems are left to build or procure these components, or to find ways to do without them.

Ultimately, the popularity of conversational AI among the public and in the enterprise is helping drive interest among developers and machine learning engineers looking to commercialize their skills in this domain. “Every year, the number of job postings you see for conversational AI, machine learning, NLP, continues to increase,” Raghunathan said. There has also been an upswell in courses, certifications and online training in these areas. “I was talking to my professor at Stanford, where I did my master’s, a few weeks ago. Back when I studied at Stanford between 2008 and 2010, we had about 150 people taking the course on natural language processing,” he added. “Now, I think it’s close to 1,000. There is a good demand for it from the job side as well. I definitely think there’s a lot of scope for this in upcoming years.”
