Tradeoffs in power management and signal integrity abound in designing IoT systems that run on battery power and are small in size, but new tools are helping engineers cope with the challenges of high-speed signals.

April 24, 2016

By Brian Fetz

There is a lot of variety in today’s IoT devices. They may be fairly simple, such as sensors transmitting small amounts of data, or more demanding, such as sensors sending large amounts of data (video, for instance). They may be single purpose (measuring temperature) or multipurpose (mobile phones), with substantial subsystems such as GPS, WLAN, Bluetooth, and cellular.

What these devices all have in common is that they can be characterized as small, portable, powered from a battery, and connected wirelessly. And therein lies the challenge: The IoT environment demands the longest battery life possible, and system engineers are often forced to make trade-offs to get the current demands as low as possible.

Unfortunately, this strategy ends up being in direct conflict with achieving the highest integrity design. The signal integrity performance of high-speed signals on IoT devices is very susceptible to the battery’s voltage and current performance.

This problem is exacerbated by the fact that data rates will go from hundreds of kHz to Gbps. Higher rates translate to higher power consumption, and here again engineers will turn to topologies that minimize power. When power is considered as a primary objective, other standard design guidelines are disregarded.

For example, transmitters in HDMI (and many other standards) use a back-match resistor to reduce the impedance mismatch that causes reflections. The problem is that delivering a given voltage to the receiver may take 30 to 100% more voltage swing at the driver to push the signal through that back-match resistor. The upshot? Lost power.
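To see where a figure like 30 to 100% can come from, here is a minimal sketch that treats the back-match resistor and the receiver termination as a simple resistive divider. The divider model, the function names, and the resistor values are illustrative assumptions, not details taken from the HDMI specification.

```python
def required_drive_swing(v_receiver, r_back_match, r_termination):
    """Driver swing needed so that v_receiver appears across the termination.

    Assumption: the back-match resistor and the receiver termination form a
    plain resistive divider (steady state, lossless line).
    """
    if r_termination <= 0:
        raise ValueError("r_termination must be positive")
    return v_receiver * (r_back_match + r_termination) / r_termination


def extra_swing_percent(r_back_match, r_termination):
    """Extra driver swing, in percent, compared with no back-match resistor."""
    if r_termination <= 0:
        raise ValueError("r_termination must be positive")
    return 100.0 * r_back_match / r_termination


if __name__ == "__main__":
    # Hypothetical values: a 50-ohm termination with two back-match choices.
    for r_bm in (15.0, 50.0):
        print(r_bm, extra_swing_percent(r_bm, 50.0),
              required_drive_swing(0.5, r_bm, 50.0))
```

Under these assumptions, a back-match resistor equal to the termination doubles the required swing (100% more), while a smaller resistor costs proportionally less swing at the price of a poorer match.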

The high-speed MIPI signals for displays and cameras are typically found in these types of devices. These are probably the most power-consuming components of all and particularly problematic for engineers.

Poor signal integrity has a direct impact on data rates. In some cases, such as USB 3, receiver errors cause packets to be retransmitted. This reduces the effective data rate, although it often goes unnoticed because the system is designed to recover. Other signal integrity problems, not specific to new IoT devices, show up as dropped calls and pixel errors on a TV display. TVs, in fact, are a special case, as cable systems are often split multiple times and the operational signal-to-noise ratio is very marginal.
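The effect of retransmissions on throughput can be estimated with a short sketch. It assumes packet errors are independent and that every errored packet is resent until it arrives, so each packet needs 1/(1 - p) transmissions on average. The function name and the numbers are hypothetical, and protocol overhead is ignored.

```python
def effective_data_rate(raw_rate_bps, packet_error_rate):
    """Estimate useful throughput when errored packets are retransmitted.

    Assumption: independent packet errors with probability p, each errored
    packet resent until received, so goodput = raw_rate * (1 - p).
    """
    if not 0.0 <= packet_error_rate < 1.0:
        raise ValueError("packet_error_rate must be in [0, 1)")
    return raw_rate_bps * (1.0 - packet_error_rate)


if __name__ == "__main__":
    # Illustrative only: a 5 Gbps link at several assumed packet error rates.
    for per in (0.0, 0.01, 0.1):
        print(per, effective_data_rate(5e9, per))
```

Even an error rate that the user never notices still shows up as lost throughput and as extra transmit energy, which matters on a battery-powered device.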

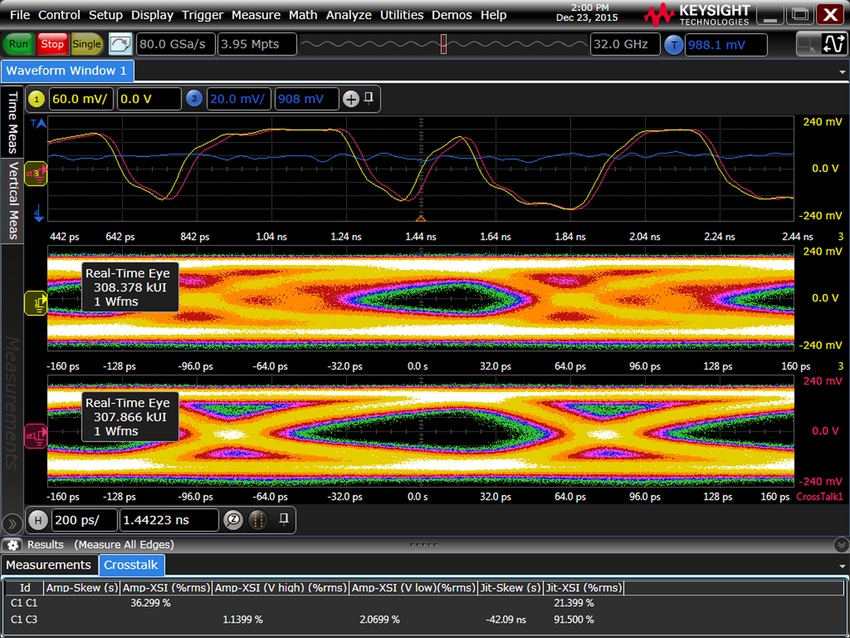

Any noise and instability can cause degradation in high-speed signals. This is why power integrity is also becoming more important for IoT validation. In addition, high-speed signals are laid out more densely in IoT devices, resulting in issues such as crosstalk and coupling.

When a number of subsystems co-exist in this way, interference with one another is real, and optimization of one device will result in compromises in other parts of the design. RF interference and digital crosstalk mechanisms are common challenges.

Although RF interference can often be solved through the use of additional shielding, it can be a costly approach. Alternatively, engineers will need to spend more time validating the interfaces to ensure the high-speed signals work correctly across different environmental conditions, temperatures, and voltage levels.

But wait, there’s more: Given the compressed product life cycles in the IoT space, engineers often skip the prototyping phase so they can get their designs to market faster. At the very least, that requires modeling the environment and measuring and estimating link budgets for digital systems, crosstalk and so on.
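As a rough illustration of what estimating a link budget for a digital interface can look like, the sketch below computes the margin left after channel loss and a crosstalk penalty. The budget terms, the function name, and all numeric values are assumptions chosen for the example; a real budget would use measured or simulated figures.

```python
import math


def link_margin_db(v_tx_mv, v_rx_min_mv, channel_loss_db, crosstalk_penalty_db):
    """Margin in dB between the delivered signal and receiver sensitivity.

    Assumption: the budget is the ratio of transmit swing to the minimum
    swing the receiver needs, minus channel loss and a crosstalk penalty.
    """
    if v_tx_mv <= 0 or v_rx_min_mv <= 0:
        raise ValueError("voltages must be positive")
    available_db = 20.0 * math.log10(v_tx_mv / v_rx_min_mv)
    return available_db - channel_loss_db - crosstalk_penalty_db


if __name__ == "__main__":
    # Hypothetical numbers: 1000 mV swing, 100 mV sensitivity,
    # 12 dB channel loss, 3 dB crosstalk penalty.
    margin = link_margin_db(1000.0, 100.0, 12.0, 3.0)
    print("margin (dB):", margin, "OK" if margin > 0 else "FAIL")
```

A positive margin suggests the link should close under the stated assumptions; lowering the transmit swing to save power eats directly into that margin.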

So how can engineers be successful in designing for the IoT with an “only one try” approach? Understanding interference effects, link budgets, and so on is critical. Identifying the right tools will also be key: testing, probing, and analysis software solutions that quickly identify, quantify, and remove crosstalk for analysis, and that validate designs quickly.

Brian Fetz is a Program Manager at Keysight Technologies.

This article originally appeared in Electronic Design.
